Deepfakes have manipulated countless people on social media. A recent deepfake video that made the rounds showed Ukrainian President Volodymyr Zelensky urging his troops to surrender to Russian forces. Deepfakes also played an integral role in the 2016 U.S. presidential election, as a Russian troll farm deployed over 50,000 bots on Twitter, spreading disinformation and urging followers to support Donald Trump. But how easy is it to tell whether a video is a deepfake or legit?

In a new study, University of Sydney neuroscientists examined artificial intelligence-generated fake faces and found that people’s brains can detect deepfakes, even though the individuals themselves could not verbally report which faces were real and which were fake.

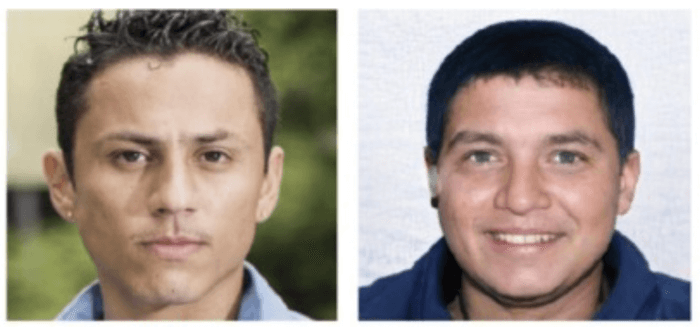

The Australian researchers conducted two experiments: one behavioral and one using neuroimaging. Participants in the behavioral experiment were shown 50 images of real and computer-generated fake faces and were then asked to identify which were real and which were fake.

A separate group was shown the same images while their brain activity was recorded using electroencephalography (EEG), without knowing that half the images were fakes. When analyzing participants’ brain activity, researchers found deepfakes could be identified 54% of the time. When participants were asked to verbally identify the computer-generated faces, however, they could do so only 37% of the time.

“Although the brain accuracy rate in this study is low — 54 percent — it is statistically reliable,” says senior researcher Thomas Carlson, an associate professor for the University of Sydney’s School of Psychology, in a statement. “That tells us the brain can spot the difference between deepfakes and authentic images.”
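How can an accuracy as low as 54 percent still be "statistically reliable"? The article does not describe the statistical method the researchers used, so the following is only a rough sketch of the general idea: with a 50 percent chance level (half the images were fakes), the same 54 percent hit rate becomes harder and harder to explain by luck as the number of classified trials grows. The trial counts and the function name `tail_probability_at_chance` are hypothetical, chosen purely for illustration, and are not taken from the study.

```python
from math import comb


def tail_probability_at_chance(successes: int, trials: int) -> float:
    """Probability of at least `successes` correct calls out of `trials`
    when every call is a 50/50 guess (exact one-sided binomial tail)."""
    # Count the outcomes with >= `successes` hits using exact integers,
    # then divide by the 2**trials equally likely outcomes.
    favourable = sum(comb(trials, k) for k in range(successes, trials + 1))
    return favourable / 2**trials


# Hypothetical trial counts, NOT figures from the study.
for trials in (50, 500, 5000):
    successes = round(0.54 * trials)  # a 54% hit rate at each sample size
    p = tail_probability_at_chance(successes, trials)
    print(f"{trials:>5} trials, {successes} correct: p = {p:.2g}")
```

With only a few dozen trials, a 54 percent hit rate is well within what guessing would produce; with thousands of trials, the same rate would be very unlikely to arise by chance. That is the sense in which a small effect can still be a reliable one.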

Researchers believe their data can help combat deepfakes.

“The fact that the brain can detect deepfakes means current deepfakes are flawed,” explains Carlson. “If we can learn how the brain spots deepfakes, we could use this information to create algorithms to flag potential deepfakes on digital platforms like Facebook and Twitter.”

Researchers even believe future technology could alert people to deepfake scams in real time. For example, security personnel could wear EEG-enabled helmets that alert them when they might be confronting a deepfake.

“EEG-enabled helmets could have been helpful in preventing recent bank heist and corporate fraud cases in Dubai and the UK, where scammers used cloned voice technology to steal tens of millions of dollars,” says Carlson. “In these cases, finance personnel thought they heard the voice of a trusted client or associate and were duped into transferring funds.”

Carlson says more research needs to be done on detecting deepfakes.

“What gives us hope is that deepfakes are created by computer programs, and these programs leave ‘fingerprints’ that can be detected,” says Carlson. “Our finding about the brain’s deepfake-spotting power means we might have another tool to fight back against deepfakes and the spread of disinformation.”

The study was published in the journal Vision Research.
